Lil’ Signals: The Translation Problem in an Age of Infinite Output

Why the real advantage is no longer creation but judgment, trust, and interpretation

👋🏾 Hey There!

Lil’ Signals is your go-to newsletter for decoding the cultural currents shaping our world. Powered by Nichefire, we break down cultural trends, tell compelling stories, and share actionable insights on how to tap into the power of cultural listening.

Stay ahead of the curve, one signal at a time.

This week, I want to talk about a shift that feels bigger than AI content, bigger than tools, and honestly bigger than productivity.

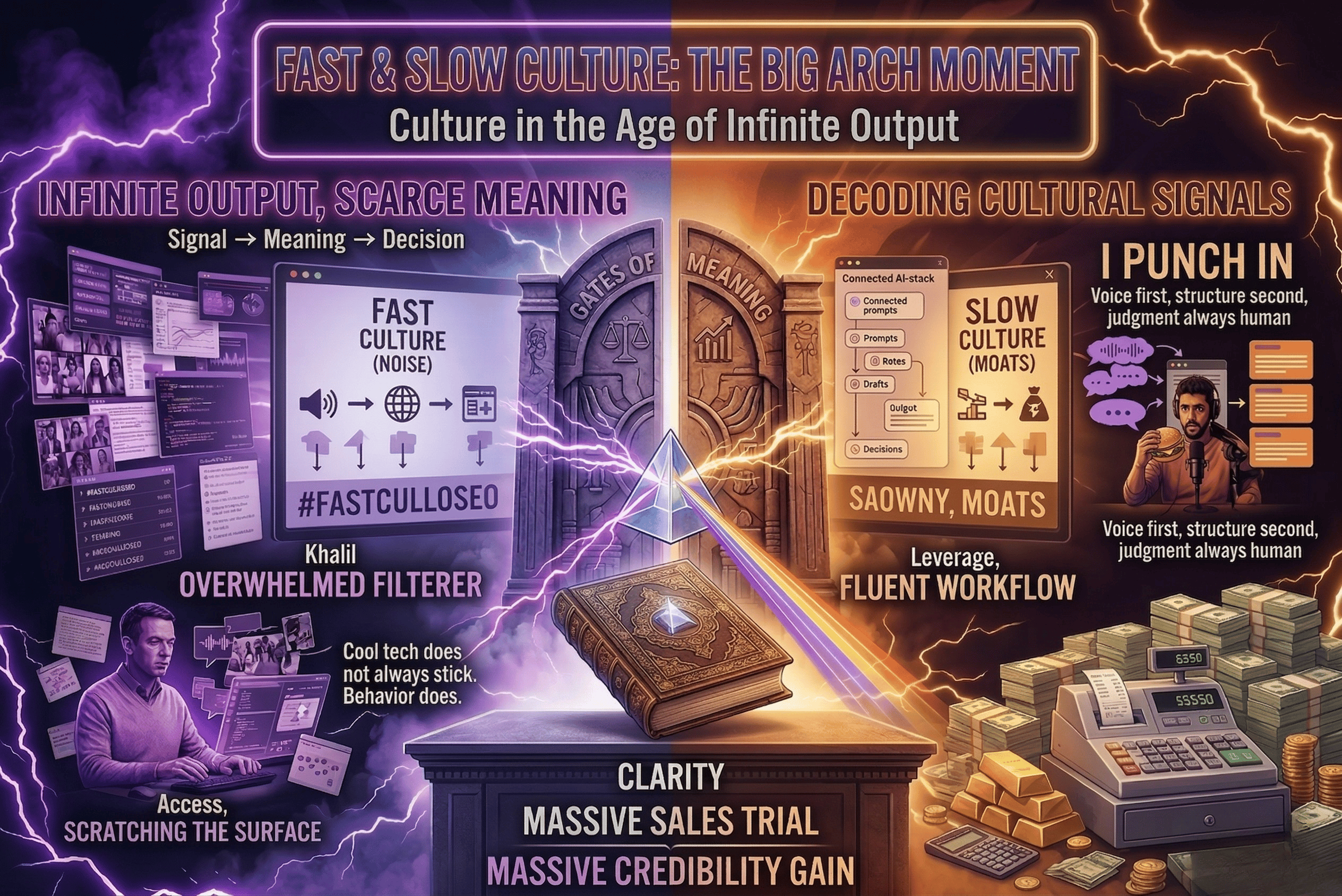

We are entering a world where output is becoming cheap, fast, and endless.

Words, images, music, summaries, strategy docs, synthetic advice, synthetic people, synthetic creativity, all of it can now be produced at a scale that would have sounded ridiculous a few years ago.

But here’s the part I cannot stop thinking about:

More output does not automatically create more trust.

More content does not automatically create more meaning.

And more capability does not automatically create real adoption.

So the real advantage is moving.

It is shifting away from pure creation and toward something else:

Translation.

The people who win in this next era will not just be the people who can generate more.

They will be the people who know what to do with what gets generated.

Let’s dive in!!

StoryTime: When Output Stops Being the Advantage

For a long time, the bottleneck was production.

Could you write the article, design the visual, make the video, generate enough ideas, keep up with the pace of the internet?

That used to be the challenge.

Now the challenge is shifting.

The machine can make the first draft. Sometimes the second. Sometimes ten versions before your coffee gets cold.

At first, that feels like magic. I get it because I use it too.

When these tools start working for you, they feel like a cheat code. You move faster, test more ideas, get unstuck quicker. The appeal is obvious.

But speed does not solve judgment.

It gives judgment more to do.

That is the real shift.

We are not running into a shortage of content. We are running into a shortage of trust, taste, and interpretation.

Infinite output does not equal infinite value.

That is where people are still getting fooled. When a tool gets better, we assume the market will accept whatever it produces. But people do not adopt capability just because it exists.

They adopt what feels useful, safe, legible, trustworthy, and aligned with how they already behave.

That difference matters right now.

Take the AI skills gap.

To me, the immediate divide is not replacement. It is fluency.

Some people already know how to use these tools in a way that compounds. They can prompt, shape, test, refine, stack workflows, and extract leverage. Other people are still scratching the surface.

And that gap widens fast.

I feel it myself when I switch platforms. I can be highly fluent in one environment, then step into another and feel the friction immediately. The upside may be there, but fluency takes reps. Familiarity creates speed. Speed creates confidence. Confidence creates experimentation. Then the early adopters start pulling away.

That is why I keep coming back to the same point:

The real AI gap right now is fluency.

A lot of people are not behind because the technology is unavailable. They are behind because they do not yet know how to turn capability into workflow.

And I am not outside that shift. I am inside it.

I use AI every week, a lot. Not as a gimmick, and not to avoid thinking, but as part of how I work.

I have written before about the human in the loop and the Army of One, and this article sits in that same lane. I even built an OpenClaw assistant that helps me develop this newsletter. My process is simple: I punch in. I sit down, talk to the computer, dictate notes, dump reactions, and work through what I am seeing in real time. Then I use AI to help structure the mess, organize the signal, and shape it into the Lil’ Signals format.

But the thinking is still mine. The point of view is still mine. The judgment is still mine.

That matters because AI did not replace my voice. It helped me recover my process.

What used to take me a week can now take a couple of focused hours. Not because the machine is smarter than me, but because it helps me stay in motion long enough to reach clarity.

That is the part people miss when they talk about AI like it is only a threat or only a cheat code.

For a lot of us, it is neither.

It is leverage. It is scaffolding. It is a thought partner that helps close the gap between what you know, what you mean, and what you can actually ship, as long as the human stays in the loop.

And that matters far beyond individual productivity.

It matters for teams, leadership, and companies trying to figure out whether they are truly innovating or just getting louder.

Because cool tech does not always stick.

Behavior does.

A flashy product can dominate the conversation for a week and still never become part of daily life. A beautiful demo can go viral and still fail to build a durable habit. Something can be objectively impressive and culturally rejected at the same time.

We are already seeing that.

Some AI use cases stick because they reduce friction in real life. They save time, reduce blank-page anxiety, and help people iterate faster.

Others are stuck in a strange middle ground where the capability is real, but the trust is not.

That trust problem is showing up everywhere: in branded imagery, where polished perfection starts to feel suspicious; in consumer tech, where automation can feel less like convenience and more like manipulation; in chatbots, where people drift toward systems for advice and emotional support even though those systems are built to mirror, flatter, and oversimplify; in music and media, where novelty gets attention but authenticity still earns loyalty.

That is why I do not think this moment is really about AI replacing culture.

I think it is about AI exposing what culture values when output is no longer scarce.

And what people value, over and over again, is not just creation.

It is judgment.

Who can tell what is actually good?

What is useful?

What is risky?

What will people trust?

What is a cool demo, and what is a real behavior shift?

That is translation work.

Translation turns noise into signal. It turns capability into adoption. It turns a flood of material into something people can actually use.

And in a world where everyone can generate, interpretation becomes premium.

That is the opportunity.

For strategists, the job becomes less about collecting more material and more about making sharper meaning.

For marketers, the advantage is not just producing faster. It is understanding what people will believe, repeat, and act on.

For insights teams, the win is not handing over a pile of outputs. It is helping the organization understand which behaviors matter and what they point to next.

For leaders, the lesson is that raw capability is not the same as durable adoption.

That distinction will separate the real winners from the people who are technically advanced but strategically confused.

Because in an age of infinite output, the rarest thing is not content.

It is clarity.

The Bottom Line

We are moving from a world defined by content scarcity to one defined by meaning scarcity.

That changes the value chain.

The winners will not just be the people who use AI. They will be the people who know how to direct it, question it, refine it, and translate its output into trust, relevance, and behavior change.

The future may belong to people who can create.

But it will reward the people who can interpret.

See you next week,

Khalil

Want the unfiltered version? Catch me live on Twitch.

Let’s explore the power of culture, one signal at a time.

Lil’ Surfing 🌊

Lil’ Surfing started as a catch-all: a place to stash the strange, funny, and culturally loud. It still is, but now it’s powered by Firesearch.

Each week, I’ll be dropping a short culture scan: a peek into what’s bubbling beneath the surface, based on live searches from Nichefire’s system. You’ll still get the weird. You’ll just get it with a sharper edge.

This week, I ran a Firesearch on “AI fluency,” “synthetic trust,” “durable adoption,” and “creator behavior,” and here’s what surfaced:

AI fluency is moving up the org chart

One of the strongest signals in the scan was executive training. AI fluency is no longer just a practitioner problem. It is becoming a leadership one. Once executives get more comfortable with AI, the conversation shifts fast, from experimentation to optimization, budget pressure, and getting more work done with leaner teams.

The real gap is widening between users and non-users

There is a growing divide between people learning these tools and people still resisting them. Every month, fluent users get faster, more confident, and more embedded in the workflow. Everyone else falls further behind. This feels less like a niche technical skill and more like basic worker literacy, the same way computer skills became table stakes.

Synthetic trust is getting messier

People are asking AI for personal advice. Companies are using synthetic audiences and AI-shaped data in ways most consumers probably do not fully see. Add in transparency debates, provenance laws, and cybersecurity anxiety, and the signal is clear: synthetic systems are scaling faster than public trust in them.

Durable adoption is where the hype gets tested

The demos are polished. The promise is big. But when agentic systems hit real enterprise workflows, friction shows up fast. The signal here is that durable adoption will not come from flashy AI theater. It will come from tools that actually work inside messy organizations, with real teams and real constraints.

Creator behavior is not pulling back. It is accelerating

AI use in editing, ideation, coding, and content production is not slowing down. It is becoming normal behavior. That means the volume of content is only going up, and the creators who know how to orchestrate these tools will keep gaining leverage. AI is starting to look a lot more like the computer or smartphone shift: not optional, just increasingly built into how people work.

🔗 Watch the live demo walkthrough

💬 Got a theme you want me to run for a month? Reply with your pick.

💬 Your journey into the world of cultural insights starts here!

Thank you for being part of the Lil’ Signals community. Together, we’ll decode the world, one signal at a time.
